Everything we know about Core Web Vitals and SEO

June 15th 2021: Multiple updates from Google I/O 2021, a Web Vitals Q&A session and another Google blog post.

April 19th 2021: Google have announced a delay to the rollout of the Page Experience update. The SEO changes will now gradually roll out from mid-June 2021.

April 13th 2021: Google have updated the CLS metric to better account for complex web applications. This should generally result in a slight improvement in reported CLS values.

March 30th 2021: Google have updated their FAQs page to confirm and clarify many of the details in this post!

In summary

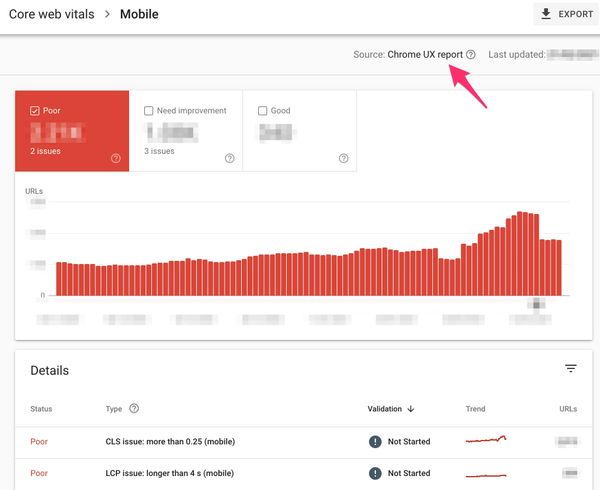

The update will roll out between mid-June and August 2021. It will introduce a positive ranking signal for page experience, in mobile search only [1], for pages or groups of similar URLs [2] which perform well against the Core Web Vitals targets. The impact of the signal is expected to be small [4]. Google has stated that the Page Experience update will roll out to desktop search at some point in the future.

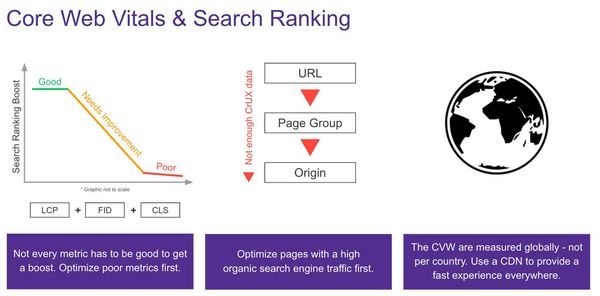

"Meeting the targets" means that the aggregate 75th percentile value, for a single URL (or across a 'similar' set of URLs when there is not enough data for an individual URL), meets the thresholds for 'good' shown below (2.5s for LCP, 100ms for FID and 0.1 for CLS). The ranking boost is calculated separately for each metric, with green giving the maximum boost, amber giving some boost and red giving no boost [3]. The data is aggregated from Google's private view of CrUX data (see the section 'Search Console is the source of truth' for details of this assumption).

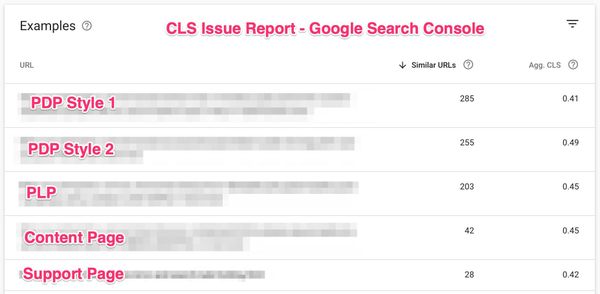
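To make the "75th percentile against thresholds" idea concrete, here is a minimal Python sketch. The 'good' thresholds come from the text above; the 'poor' boundaries (4s for LCP, 300ms for FID, 0.25 for CLS) are Google's published values. The nearest-rank percentile and the function names are my own assumptions for illustration. Google does not document exactly how it aggregates CrUX data for search, so treat this as a way to reason about your own field data rather than a reproduction of Google's calculation.

```python
import math

# (upper bound for 'good', upper bound for 'needs improvement')
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "FID": (100, 300),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless score
}


def percentile_75(values):
    """Nearest-rank p75: the smallest sample with at least 75% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, values):
    """Return (p75, rating) for a list of field samples of one metric."""
    good, needs_improvement = THRESHOLDS[metric]
    p75 = percentile_75(values)
    if p75 <= good:
        return p75, "good"
    if p75 <= needs_improvement:
        return p75, "needs improvement"
    return p75, "poor"


# Hypothetical LCP samples (ms) from eight page views.
print(rate("LCP", [1200, 1800, 2100, 2400, 3900, 5200, 1500, 2000]))
```

Note that a quarter of the samples can be slow without affecting the rating: in the example, two of the eight page views are well over 2.5s, yet the p75 still lands in 'good'.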

URLs can be grouped by similarity

As can be seen in Search Console, URLs are grouped by Google into similar sets of pages. The aggregate Core Web Vital scores shown here will likely be the ones which impact the Page Experience ranking factor, rather than individual URL performance. You can click on the 'Similar URLs' column in the view below to see up to 20 of the URLs Google has included in the group.

June 15th 2021: I now believe that URL groups are only used when there is not enough field data for a single URL. Run your page through PageSpeed Insights to see if there is enough data for your URL(s).

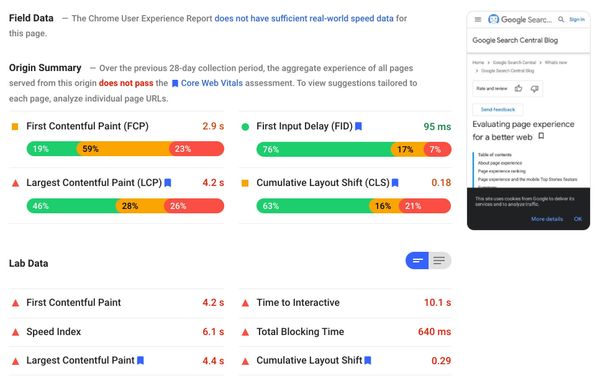

Field is more important than Lab

Field data - data collected from real users browsing pages - is the only source which will impact Page Experience [5]. Lab data - test data collected from emulated browsers - may not reflect the real user experience and has no bearing on SEO.

Any difference between lab and field results could be down to many factors relating to your traffic:

- Geographic user distribution and varying network quality (LCP)

- Varying layouts on different viewports (CLS)

- Varying mobile device specifications (FID)

Lab data is still useful when diagnosing an issue and confirming an improvement, but we must rely on field data for feedback on the actual user experience delivered. Note that the Lighthouse Score is calculated from lab data, not field! [6]

Search Console is the source of truth

Whilst Core Web Vitals values are available in a multitude of tools...

- PageSpeed Insights (lab and field)

- WebPageTest (lab)

- Real User Measurement tools like mPulse (field)

- Chrome UX Report (field)

...none of these directly reflect the values that will be used by search.

PageSpeed Insights' field data for a page is the closest you can get to the data used in search, outside of Search Console. It is the only public source of page-level data and uses the same 28-day rolling window as search - but the values shown are for the individual URL under test, not the aggregate of related pages as indicated in Search Console.
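If you want to check many URLs for page-level field data, the PageSpeed Insights v5 API can be scripted. The sketch below is a rough illustration using only the Python standard library. The helper names are mine, and the response fields used (`loadingExperience`, `metrics`, `percentile`, `category`, `origin_fallback`) reflect my understanding of the v5 response, so check them against the API reference before relying on this.

```python
import json
import urllib.parse
import urllib.request

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def fetch_psi(page_url, strategy="mobile"):
    """Call the PageSpeed Insights API for one URL (needs network access)."""
    query = urllib.parse.urlencode({"url": page_url, "strategy": strategy})
    with urllib.request.urlopen(f"{PSI_ENDPOINT}?{query}") as response:
        return json.load(response)


def summarise_field_data(psi_response):
    """Return (has_url_level_data, {metric_name: (p75, category)})."""
    experience = psi_response.get("loadingExperience", {})
    metrics = experience.get("metrics", {})
    # Assumption: when the URL itself has too little data, the API serves
    # origin-level data instead and sets origin_fallback to true.
    url_level = bool(metrics) and not experience.get("origin_fallback", False)
    summary = {
        name: (metric.get("percentile"), metric.get("category"))
        for name, metric in metrics.items()
    }
    return url_level, summary


# Offline example using a trimmed, hypothetical response body.
sample = {
    "loadingExperience": {
        "metrics": {
            "LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 2300, "category": "FAST"}
        }
    }
}
print(summarise_field_data(sample))
```

For a live check you would call `summarise_field_data(fetch_psi("https://example.com/"))`. Heavier use of the API generally needs an API key, which is left out here.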

What we need to act upon now

The Page Experience Update is more of a carrot approach than stick - there is no direct penalty for failing to meet Google's goals. That said, meeting the target values for Core Web Vitals will inevitably result in an improved user experience. Use the tools available to determine which pages on your sites are falling behind, then get to work on improving them! I've written a post on diagnosing and improving Core Web Vitals which may help.

Remember that any changes you make to your page performance will take 28 days to be fully reflected in Google's data - so don't expect immediate returns there.
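The effect of the 28-day window is easy to underestimate, so here is a small simulation. It assumes (and this is only my assumption, Google does not publish the exact method) that all samples in the window are pooled before the 75th percentile is taken. The LCP values are made up: four page views per day, with a fix shipping after day 28.

```python
import math

WINDOW_DAYS = 28


def percentile_75(values):
    """Nearest-rank 75th percentile of a list of samples."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def rolling_p75(daily_samples, day):
    """p75 over the 28 days ending on `day` (0-indexed), pooling every sample."""
    start = max(0, day - WINDOW_DAYS + 1)
    pooled = [v for samples in daily_samples[start:day + 1] for v in samples]
    return percentile_75(pooled)


# Hypothetical LCP samples (ms): 28 slow days, then 28 days after a fix.
before = [2000, 3000, 4000, 5000]
after = [1000, 1500, 2000, 2500]
history = [before] * 28 + [after] * 28

for day in (27, 34, 41, 48, 55):
    print(f"{day - 27:2d} days after the fix: p75 = {rolling_p75(history, day)} ms")
```

With these numbers the reported p75 does not move at all in the first week, then steps down gradually, and only reaches the true post-fix value of 2000 ms once every day in the window postdates the change.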

Do we like Core Web Vitals?

I believe that Core Web Vitals are on balance a Good Thing™️:

- The vitals encourage us to focus on UX metrics, not just technical metrics

- The update builds a bridge between SEO, Tech SEO, Performance Marketing and Engineering

- Using field data increases focus on real user measurement, versus the simpler (and less representative) lab testing

- The targets start a good discussion around percentiles for field data - 75th is a good balance between the median (hard to impact) and the 90th / 95th (potentially subject to volatility)

There are a number of issues with Core Web Vitals though:

- The metrics are only measurable in Chromium-based browsers

- The ranking benefit is determined only by the experience of visitors using Chrome

- First Input Delay depends heavily on when (and if!) users interact with a page, making it less actionable than Total Blocking Time or maximum potential FID [7].

It's worth noting at this point that the targets for Core Web Vitals set out by Google are quite strict. Many Google pages do not achieve 'good' in all three, and very few production websites do. That said, it is possible to achieve green across the board without significant engineering work. In my opinion this makes them good targets: difficult but achievable.

Other SEO factors

AMP will no longer be a requirement for the Top Stories carousel in mobile search after the update, meaning all pages which meet the Google News content policies will be eligible for inclusion, with Core Web Vitals impacting ranking in a similar way to normal search. [8]

What is yet to be confirmed?

There are still a number of assumptions we are making, which are yet to be confirmed by Google:

- URLs are grouped for the purposes of the ranking signal, and origin summary used for new / ungrouped URLs. Can we control this grouping? It sometimes does not follow seemingly logical patterns (e.g. PDP and PLPs grouped together)

- How many experiences are included in the CrUX dataset - e.g. how many users opt in to share usage statistics and what (if any) sampling is applied

- Is geography a factor, e.g. does the TTFB measured in a country determine page experience ranking in that country? (June 15th 2021: I believe geography is not a factor. Core Web Vitals are measured by country, but the ranking boost is global).

- CLS unfairly penalises single-page applications. There is a proposal to improve the metric, but we do not know if this will be released before May. (June 15th 2021: Google has confirmed that they are using the new CLS metric - calculated by windows of up to five seconds throughout a page lifecycle. This is better for SPAs than before, but does not fix the fundamental issue: soft navigations / route changes are not accounted for correctly.)

I hope that these points are confirmed before the May 31st deadline, if only for clarity and transparency. It's hard to hit a target that you cannot see!
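For anyone wondering how the windowed CLS calculation mentioned in the list above differs from a simple running total, here is a minimal sketch. The five-second cap is from Google's confirmation. The one-second maximum gap between shifts in the same window is taken from Google's public description of the updated metric. The function name and the sample shifts are hypothetical, and real measurements should also exclude shifts that follow recent user input, which this sketch skips.

```python
def windowed_cls(shifts, max_gap_ms=1000, max_window_ms=5000):
    """Return the largest session-window sum of layout shift values.

    `shifts` is a list of (start_time_ms, value) tuples. A shift joins the
    current window if it starts less than `max_gap_ms` after the previous
    shift and less than `max_window_ms` after the window's first shift.
    """
    best = 0.0
    window_sum = 0.0
    window_start = None
    previous = None
    for start, value in sorted(shifts):
        in_window = (
            previous is not None
            and start - previous < max_gap_ms
            and start - window_start < max_window_ms
        )
        if in_window:
            window_sum += value
        else:
            # Start a new session window at this shift.
            window_start = start
            window_sum = value
        previous = start
        best = max(best, window_sum)
    return best


# Hypothetical long-lived page: small shifts early on, one large shift much later.
shifts = [(0, 0.05), (500, 0.05), (3000, 0.02), (3400, 0.03), (10000, 0.2)]
print(windowed_cls(shifts))          # worst single window
print(sum(v for _, v in shifts))     # the older, cumulative-style total
```

The windowed value reflects only the worst burst of shifts, whereas the cumulative total keeps growing for as long as the page stays open, which is why long-lived single-page applications fared badly under the old definition.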

Am I missing something?

Probably! I have collected this information from videos, blog posts and tweets; trying to find a legitimate source of truth is disappointingly difficult. If you know of something different to the statements above (with a source) please let me know by email or Twitter - I'm keen to update this page as we learn more.

Sources

1. "At this time, using page experience as a signal for ranking will apply only to mobile Search" - Google Search Console Help. 12 March 2020.

2. John Mueller in Google SEO office-hours on URL grouping:

   "... for example, to split out the forum from our site where we can tell, oh, slash forum is everything forum and it's kind of slow, and everything else that's not in slash forum is really fast. If we can recognize that fairly easily, that's a lot easier. Then we can really say, everything here in slash forum is kind of slow, everything here is kind of OK. On the other hand, if we have to do this on a per URL basis where ... we can't tell based on the URL if this is a part of the forum or part of the rest of your site, then we can't really group that into parts of your website. And then we'll be forced to take an aggregate score across your whole site and apply that appropriately. I suspect we'll have a little bit more information on this as we get closer to announcing or closer to the date when we start using Core Web Vitals in search, but it is something you can look at already a little bit in Search Console. There is a Core Web Vitals report there, and if you drill down to Individual Issues, you'll also see this your URL affects so many similar URLs. And based on that, you can already kind of tell, oh, is Google able to figure out that my forum is grouped together or is it not able to figure out that these belong together?"

3. @JohnMu on Twitter.

4. "Ranking wise it's a teeny tiny factor, very similar to https ranking boost." (not directly related to Page Experience, but can we infer similarity) - Gary Illyes (Google's Webmaster Trends Analyst). 28 April 2020.

5. "Core Web Vitals are a set of real-world, user-centered metrics" - Google Search Central. 28 May 2020.

6. "The Performance score is a weighted average of the metric scores." - Lighthouse performance scoring. 12 June 2020.

7. "Since FID measures when actual users first interact with your page, it's more inherently variable than typical performance metrics. See Analyzing and reporting on FID data for guidance about how to evaluate the FID data you collect." - Max Potential First Input Delay. 16 October 2019.

8. "As part of this update, we'll ... remove the AMP requirement from Top Stories eligibility" - Evaluating page experience for a better web. 28 May 2020.